Poor performance of neural network: I don't have much experience with neural networks, but I have read that inputs into neural networks should be scaled in some way - either standardised, or to lie within some narrow and consistent interval.There's no real hard and fast rule about when to choose which method. Other approaches include target encoding (or mean encoding), and the hashing trick. Handling categorical features correctly: using one-hot encoding is one valid approach.What would be a fit for purpose neural network to solve this problem with deep learning? I am concerned the ensemble methods may not be appropriate or that I am doing something wrong. Why do I have to add so many more estimators to XGBoost (10,000) to get the same performance as Random Forest (100)? Should I consider a different type of neural network for regression? Why does the neural network not perform that well? Running with 50 iterations I get accuracy at R2 0.94 is pretty good and both test and trainingĪccuracy look good and in same ballpark, so I don't think it is The neural network stalls at 82 iterations and doesn't go any further. I've used 338 neurons in the input layer as this is the exact number of columns. Print('nn test score: ', nn.score(test_features, test_labels)) Print('nn train score: ', nn.score(train_features, train_labels)) Nn_predictions = nn.predict(test_features) Nn = MLPRegressor(hidden_layer_sizes=(338, 338, 50),Īctivation='relu', solver='adam', max_iter = 100, random_state = 56, verbose = True) from sklearn.neural_network import MLPRegressor I am using a pretty beefy machine with 8 cores and 30 GB RAM on Google Cloud. According to the books I have been reading on deep learning, a neural network should be able to outperform any shallow learning algorithm given enough time and horse power. MLP Neural Networkįinally I wanted to compare performance to an MLP Regressor. In this case, train and test score R2 at ~0.94. 
Print('random forest test score: ', rf.score(test_features, test_labels)) Print('random forest train score: ', rf.score(train_features, train_labels)) Rf = RandomForestRegressor(n_estimators = 100, criterion='mse', verbose=1, random_state = np.random.RandomState(42), n_jobs = -1) After some fiddling it appears 100 estimators is enough to get a pretty good accuracy (R2 > 0.94) # Instantiate model with 100 decision trees Print('xgboost test score: ', x.score(test_features, test_labels)) Print('xgboost train score: ', x.score(train_features, train_labels)) X = XGBRegressor(random_state = 44, n_jobs = 8, n_estimators = 10000, max_depth=10, verbosity = 3) I needed so push # estimates above 10,000 to get a decent accuracy (R2 > 0.94) # Import the model we are usingįrom sklearn.ensemble import RandomForestRegressorįrom trics import mean_squared_error

Output: Training Features Shape: (128304, 337)įirst we try training XGBoost model. Print('Testing Labels Shape:', test_labels.shape) Print('Testing Features Shape:', test_features.shape) Print('Training Labels Shape:', train_labels.shape) Then I explore the data: print('Training Features Shape:', train_features.shape) Train_features, test_features, train_labels, test_labels = train_test_split(features, labels, test_size = 0.25, random_state = 42) # Split the data into training and testing sets Spit into SK Learn training and test set: # Using Skicit-learn to split data into training and testing setsįrom sklearn.model_selection import train_test_split

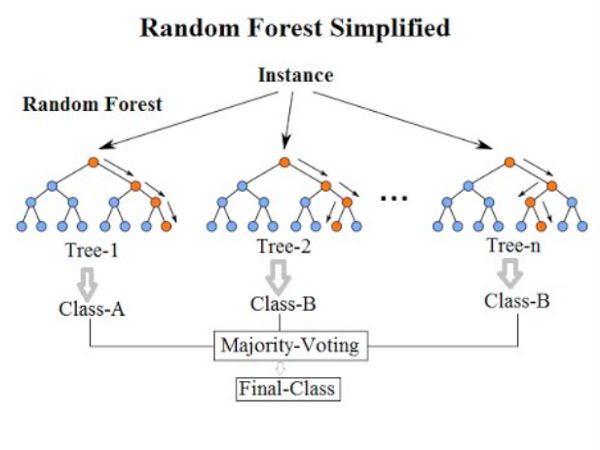

Then I isolate features from labels (TOTAL_PAID): labels = np.array(features)įeatures = features2.drop('TOTAL_PAID', axis = 1)įeature_list_no_facts = list(lumns) The majority of features are categorical: categoricals = Ĭols = įeatures = pd.read_csv('gs://longtailclaims2/filename.csv', usecols = cols, header=0, encoding='ISO-8859-1')Ĭategoricals = įirst I turn categorical values into 0s and 1s: features2 = pd.get_dummies(features, columns = categoricals) The data set has the following columns: cols = I have settled on three algorithms to test: Random forest, XGBoost and a multi-layer perceptron. Aim is to teach myself machine learning by doing. I'm building a (toy) machine learning model estimate the cost of an insurance claim (injury related).
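The post one-hot encodes its categorical columns with `pd.get_dummies`, and the advice quoted in it is that neural network inputs should be standardised. A minimal sketch of combining the two is below. It is an illustration under stated assumptions, not the original poster's code: the data is synthetic, every column name except `TOTAL_PAID` is hypothetical, the target is kept on a small scale, and the layer sizes are arbitrary.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split
from sklearn.neural_network import MLPRegressor
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for the claims data. INJURY_TYPE, AGE and WEEKLY_WAGE
# are hypothetical columns; only TOTAL_PAID is named in the post.
rng = np.random.RandomState(42)
n = 2000
df = pd.DataFrame({
    "INJURY_TYPE": rng.choice(["back", "neck", "knee"], size=n),
    "AGE": rng.randint(18, 70, size=n),
    "WEEKLY_WAGE": rng.uniform(300, 3000, size=n),
})
effect = df["INJURY_TYPE"].map({"back": 5.0, "neck": 3.0, "knee": 1.0})
df["TOTAL_PAID"] = (effect + 0.002 * df["WEEKLY_WAGE"] + 0.02 * df["AGE"]
                    + rng.normal(0, 0.1, size=n))

# One-hot encode the categorical column, then separate labels from features.
encoded = pd.get_dummies(df, columns=["INJURY_TYPE"])
labels = encoded["TOTAL_PAID"].to_numpy()
features = encoded.drop("TOTAL_PAID", axis=1).to_numpy(dtype=float)

train_features, test_features, train_labels, test_labels = train_test_split(
    features, labels, test_size=0.25, random_state=42)

# The scaler is fitted on the training rows only, because it sits inside the
# pipeline; the same transformation is then applied when scoring test rows.
scaled_nn = make_pipeline(
    StandardScaler(),
    MLPRegressor(hidden_layer_sizes=(32, 16), activation="relu",
                 solver="adam", max_iter=500, random_state=56),
)
scaled_nn.fit(train_features, train_labels)

train_r2 = scaled_nn.score(train_features, train_labels)
test_r2 = scaled_nn.score(test_features, test_labels)
print("scaled nn train score:", train_r2)
print("scaled nn test score:", test_r2)
```

Putting the scaler in a pipeline keeps the test set out of the fitted means and variances. Whether scaling alone closes the gap to the tree ensembles on the real data is not something this toy example can show.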

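Target (mean) encoding is mentioned in the post as an alternative to one-hot encoding. A minimal sketch of the idea in plain pandas follows, assuming a tiny made-up data set: `INJURY_TYPE` and the `_ENC` column name are hypothetical, and only `TOTAL_PAID` comes from the post. Each category is replaced by the mean of the label for that category, so one column stays one column instead of expanding into many dummies.

```python
import pandas as pd

# Hypothetical toy data for illustration only.
train = pd.DataFrame({
    "INJURY_TYPE": ["back", "back", "neck", "knee", "knee", "knee"],
    "TOTAL_PAID": [4000.0, 6000.0, 3000.0, 1000.0, 2000.0, 3000.0],
})
test = pd.DataFrame({"INJURY_TYPE": ["back", "knee", "shoulder"]})

# Learn the per-category label means from the training rows only,
# so that no information from the test labels leaks into the encoding.
category_means = train.groupby("INJURY_TYPE")["TOTAL_PAID"].mean()
global_mean = train["TOTAL_PAID"].mean()

train["INJURY_TYPE_ENC"] = train["INJURY_TYPE"].map(category_means)
# Categories never seen in training ("shoulder") fall back to the global mean.
test["INJURY_TYPE_ENC"] = (
    test["INJURY_TYPE"].map(category_means).fillna(global_mean)
)
print(test)
```

This naive version encodes each training row using a mean that includes its own label, which can overstate how informative the feature is. Out-of-fold means or smoothing towards the global mean are common ways to reduce that effect.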